Archive for the 'Software' category

Windows 98 Game Machine

May 12, 2014 I have been wanting to build a Windows 98 retrobox machine for a long time, since running Windows 98 games in a virtual machine with hardware acceleration that works is next to impossible, and although I know of and have downloaded games from services like Steam and gog.com, they don’t solve the problem of how to install other Windows 98 software that I am interested in using. I also enjoy the visual nuances produced by 3DFX chipsets and drivers which are not emulated by either service.

I have been wanting to build a Windows 98 retrobox machine for a long time, since running Windows 98 games in a virtual machine with hardware acceleration that works is next to impossible, and although I know of and have downloaded games from services like Steam and gog.com, they don’t solve the problem of how to install other Windows 98 software that I am interested in using. I also enjoy the visual nuances produced by 3DFX chipsets and drivers which are not emulated by either service.

The trick is in selecting the right hardware for maximum compatibility and performance. As a starting point, I have chosen the following pieces of hardware to assemble a basic machine:

- Asus P4B Motherboard with 512 MB of RAM

- SoundBlaster 128 PCI

- Radeon 9600 Pro PCI with 128 MB of VRAM

- DFE 538-TX PCI Network Card

- SD Card to IDE Interface Board

The last item may need a bit of explaining. Basically, I don’t want to deal in hard drives anymore; I want a technology which I can use to easily backup and recover software images for some of the older machines I maintain. I also want something a little less noisy and faster than a typical hard drive used the in early 2000-2006 period for Windows 98 installations. After some research, I have ordered a unit or two off of eBay a few weeks ago for just a few dollars. I want to use just one or two to trial the hardware, since I have never used these boards before and I don’t know what their limitations are. I have been using the board now for a few weeks and it seems to be performing nicely. I really enjoy the ability to remove the “drive”, shove it into an SD card reader, add a few files which would have been otherwise tedious to download via the platform’s ancient Firefox installation (I am using the latest for the Window 98SE platform, which is version 2.0.12 I think).

A warning around RAM and Windows 98SE installations. I began this process with 1 GB of RAM and throughout the process of getting my benchmark games up and running and the platform driver installations ironed out, I was plagued by mysterious problems like random crashes, screen freezing, DirectX audio issues, and out of memory errors. The out of memory errors happened less frequently, unfortunately, which made debugging the issue rather difficult, since it appeared that my sound card drivers were the source of the problems and not the onboard memory. In the end, it was due to the amount of memory I had installed on that motherboard several months ago. Once I removed the “extra” 512 MB of RAM, things just started working flawlessly. I have done a bit of research and it seems that certain hardware and software problems within the OS can trigger these issues, but it is still possible to enjoy 1 GB of memory on some installations and OS configurations, even though the operating system may not use it. In general, if you have a Window ME installation available, then use that as it seems to be a more robust Windows 98 era platform.

The games I have tested so far are Deus Ex and System Shock 2; there are issues surrounding the latter within my new installation, which I will get into in a later post.

Categories: Games, Retro, Software, Windows

No Comments »

Vectrex Emulator: ParaJVE

April 26, 2012This is a really sweet emulator from an author who seems to love the Vectrex experience. He even went through the trouble to add in the typical electrical noise you hear coming from the speaker — that feature can be disabled, if desired. Not too shabby for the Vectrex fans out there.

Categories: Software, Vectrex

No Comments »

Microsoft Quick C Compiler

December 21, 2010 When I first came in contact with this compiler, I was just starting high school and eager for the challenges ahead (except for the material which didn’t interest me — basically non-science courses). When I went to pick the courses for the year, I noticed a couple which taught computer programming. The first course, which was a pre-requisite for the second, taught BASIC while the second course taught C programming. At this point in my life, I was an old hand at BASIC, so I basically breezed through first programme. The second course intrigued me much more. I was familiar with C programming from my relatively brief experience with the Amiga, but I had a lot left to learn. My high school didn’t use the Lattice C compiler, but a Microsoft C compiler instead. I located the gentleman who taught the course and he pointed me to a book called Microsoft C Programming for the PC written by Robert LaFore and the Microsoft QuickC Compiler software. I had a job delivering newspapers at the time, so I could just barely afford the book using salary and tips saved from two weeks doing hard time ($50 at the time), but the compiler was just too expensive. So I did what any highly effective teenager would do, basically I dropped really big hints around the house (including the location and price of the compiler package I wanted) until my parents purchased a copy for me on my birthday.

When I first came in contact with this compiler, I was just starting high school and eager for the challenges ahead (except for the material which didn’t interest me — basically non-science courses). When I went to pick the courses for the year, I noticed a couple which taught computer programming. The first course, which was a pre-requisite for the second, taught BASIC while the second course taught C programming. At this point in my life, I was an old hand at BASIC, so I basically breezed through first programme. The second course intrigued me much more. I was familiar with C programming from my relatively brief experience with the Amiga, but I had a lot left to learn. My high school didn’t use the Lattice C compiler, but a Microsoft C compiler instead. I located the gentleman who taught the course and he pointed me to a book called Microsoft C Programming for the PC written by Robert LaFore and the Microsoft QuickC Compiler software. I had a job delivering newspapers at the time, so I could just barely afford the book using salary and tips saved from two weeks doing hard time ($50 at the time), but the compiler was just too expensive. So I did what any highly effective teenager would do, basically I dropped really big hints around the house (including the location and price of the compiler package I wanted) until my parents purchased a copy for me on my birthday.

There are a number of differences between the BASIC and C programming languages. One of the more obscure differences lies in how the C programming language deals with special variables that can hold memory addresses. These variables are called pointers and are an integral part of the syntax and functionality of the language. BASIC did have a few special functions which could accept and address locations in memory – I’m thinking of the CALL and USR functions specifically, although there were others. However, a variable holding an address was the same as one holding any other number since BASIC lacked the concept of strong types. The grammar of the C language is also much more complex than BASIC; it had special characters and symbols to express program scope and perform unary operations, which introduced me to the concept of coding style. When a programmer first learns a particular style of coding, it can turn into a religion, but I hadn’t really been exposed to the language long enough to form an opinion. That would come later, and then be summarily discarded once I had more experience.

There were libraries of all sorts which provided functionality for working with strings, math functions, standard input and output, file functions, and so on. At the time, I thought C’s handling of strings (character data) was incredibly obtuse. Basically, I thought the need to manage memory was a complete nuisance. BASIC never required me to free strings after I had declared them, it just took care of it for me under the hood. Despite the coddling I received, I was familiar with the concept of array allocations since even BASIC had the DIM command which dimensioned array containers; re-allocation was also somewhat familiar because of REDIM. However, there were many more functions and parameters in C related to memory management, and I just thought the whole bloody thing was a real mess. The differences between heap and stack memory confused me for a while.

There were many features of the language and compiler I did enjoy, of course. Smaller and snappier programs were a huge benefit to the somewhat sluggish software produced by the QuickBASIC compiler and the BASIC interpreter. The compiled C programs didn’t have dependencies on any run-time libraries either, even though there was probably a way to statically link the QuickBASIC modules together. Pointers were powerful and were loads of fun to use in your programs, especially once I learned the addresses for video memory which introduced me to concepts like double buffering when I began learning about animation. Writing directly to video memory sounds pretty trivial to me right now, but it was so intoxicating at the time. I was more involved in game programming by then and these techniques allowed me to expand into areas I never considered. It allowed for flicker-free animation, lightning fast ASCII/ANSI window renderings via my custom text windowing library, and special off-screen manipulations that allowed me to easily zip buffers around on the screen. A number of interesting text rendering concepts came from a book entitled Teach Yourself Advanced C in 21 Days by Bradley L. Jones, which is still worth reading to this day.

At around this time, I also started to learn about serial and network communications. The latter didn’t happen until my last year at high school. Basically, I wanted to learn how to get my computers to talk to one another. It all started when I became enchanted by the id Software game called DOOM, which allowed you to network a few machines together and play against each other in a vicious winner takes all death-match style combat. Incidentally, games like Doom, Wolfenstein 3D, or Blake Stone: Aliens of Gold led me down another long-winding path: 3D graphics, but that didn’t happen until a few months later. Again, the book store came to the rescue by providing me with a book entitled C Programmer’s Guide to Serial Communications by Joe Campbell. I was somewhat familiar with programming simple software which could use a MODEM for communication, since BASIC supported this functionality through the OPEN function, but I knew very little about the specifics. Once I dug into the first few chapters, I knew that was all going to change.

Categories: DOS, Programming, Reflections, Software

No Comments »

Dissecting DOSBox

December 20, 2010If you’re a gamer and have been for years, then you’ve probably heard of and quite possibly used DOSBox. If you haven’t, then let me introduce it to you. DOSBox is great little program for running all of your favorite classic games. Games which were originally built for monitors and video cards which since been retired, and legacy audio systems like SoundBlaster 16, Audio Galaxy, or Gravis Ultrasound. Specifically, it supports games and programs which were written for the MS-DOS or compatible operating system. Although, the software specializes in supporting games, you may have success in running other programs. Although it doesn’t make any guarantees regarding these legacy applications, which can require different features provided by unsupported drivers, I have had success in running complex software like the DJGPP compiler but run into a bit of trouble when running an older version of the TDE (Thomson-Davis Editor).

I’ll wait here while you go and download your copy of the source code…

Now that you’ve downloaded DOSBox source and presumably unpacked it, you are ready to get your hands dirty. This isn’t going to be an article about how to use DOSBox, but rather, how does it work under the hood, exactly? What are the major software gears and wheels used for handling such programs? Why don’t all of your favourite games have 100% compatibility?

The first thing to understand about DOSBox is that it’s an emulator for x86 CPU instructions, floating point unit instructions, and various functions within MS-DOS compatible operating systems. Specifically, it emulates functions around Interrupt 21H (hexadecimal) and a couple around 20H, 25H, 26H, and 27H. It also installs an NULL handler for interrupts 28H and 29H which do nothing. But before we get ahead of ourselves, let’s take a step back and look at the various modules that make up the program.

CPU Emulation. At the heart of any emulation program is the CPU emulation core. Every program (.EXE or .COM file) on your DOS powered computer contains machine code and data. When using DOSBox, it’s the CPU emulator’s job to process that machine code; therefore, each program that is teased apart and executed by DOSBox arrives at this sacred chunk of memory sooner or later.

DOSBox can be configured to emulate the x86 core in a few different ways. Therefore, when we talk about emulation for CPU cores, we’re either talking about emulating each instruction found in the program one at a time, or the emulator will choose to batch process these instructions and operands and translate them to native instructions for direct execution on the host CPU (they call this mode ‘dynamic’). This direct execution mode can provide into better performance on some machines, but may be slower on others so the original emulation mode is still available.

Emulation can be a little confusing if you’ve never heard of it before, so let’s go over it once again. An executable program contains a bunch or binary data representing machine operation codes (or op codes), operands (arguments to that opcode), and data. This information can be fed to your CPU by your operating system, or they can be read by another program, just like any other file, and interpreted one byte at a time. In the last case, it would be the emulation program which decides how to implement the functionality of the CMPSB instruction (used for comparing bytes in memory), for example, rather than the CPU providing the hardware implementation. It’s this act of interpretation that differentiates emulation from direct execution on the host processor.

FPU emulation. In the world of electronic gaming, the software powering those fantastic explosions, shattering those fragile glass windows, and hurling those flying projectiles often need to do a series of calculations to determine values for acceleration, direction, and manipulating various bits of trigonometry. These calculations can involve irrational numbers like PI (3.141592654…), which I’m sure you all remember from school. In programming terms, these numbers are often stored in variables which follow a standard method of encoding the information about the significand and the exponent (along with the sign of the number); one such standard is IEEE 754. Let’s not get too mired in the intricacies of how floating points are stored, which is quite boring after all and not conducive to an interesting read on a Friday afternoon. Instead, let’s evade this topic and push on to describing operation codes in which floating point numbers are used as operands (parameters or arguments to a function). In this case, these op codes may need to be emulated just like the ones used by the CPU. Only this time, if the encoding standard differs from the host processor (in other words, if it doesn’t use IEEE 754 and you’re using a PC to run DOSBox), then the emulator will need to convert that format into something it can use natively, which an expensive operation (in terms of adding processing cycles and increasing execution time).

The take away piece of information for all this is that floating point emulation can be slow in the worst case scenario, and fast in the best case. In either case, it’s not a good topic at parties so let’s just slowly walk away from it…

Hardware emulation. This is certainly one of the more interesting layers in DOSBox and also the most prone to hacks and tweaks within the source code. The science of taking an analog output and reproducing it digitally is prone to approximations, since the nature of analog is approximate and being digital is exactly the opposite. Most of the analog operations come from sound cards where the device is capable of producing a variety of analog wave forms which is used to create music and sound effects.

The operating system layer. Before the days of operating systems employing graphical user interfaces to shield the user from arcane console commands, and providing a host of time wasting games like Solitaire and Mind-sweeper, a sizable chunk of the PC market used DOS. Whether that was PC-DOS, MS-DOS, or DR-DOS is not really that important since the other versions typically remained closely compatible with MS-DOS. The DOS platform offered a host of utility programs to the user, along with a few drivers which included support for specific implementations for memory management, mouse drivers, and access to generic CD-ROM drives. Users could always install a specific version of a driver for piece of hardware they bought, like a Sound Blaster card, and after setting a jumper of two to configure interrupt and DMA channels, they would be off to the races. Unless something went horribly wrong…

Unbeknownst to many users but knownst to a few geeks around the Megaverse, the motherboard BIOS code provides a set of default drivers so that the boot sequence and the operating system can access a few essential devices like the hard drive, floppy drive, and keyboard when they start up. These drivers are usually ignored or replaced by the operating system so that it can provide its own, more advanced versions, but some of them are still used when your Windows operating system boots into safe mode, for example. The kernel, which is a core component of any operating system, provides mechanisms for switching between drives, accessing disks and partitions, and in the case of DOS, providing fixed names for devices like “LPT1:” (printer), “COM1:” (communications port), or “NUL:” (the abyss). These device names and indeed the drivers themselves provided a level of abstraction for the user and higher-level programs. The user could print a text file, for example, by issuing a command like “TYPE FILE.TXT > LPT1:” directly as a shell command, but they could also use a program like WordPerfect which has its own set of specialized printer drivers, so that it could do more tasks requiring advanced printing like graphics and italicized or bold lettered text.

DOSBox provides limited support for a few of these commands, but really it’s only enough to get your games up and running since that is its modus operandi after all. These commands can take one of two forms: an executable program or a keyword command available in the shell. I provide the list of available keyword commands in the Shell section below.

The interrupt layer. Much of the hard work when creating DOSBox probably rose up from the requirements around the CPU/FPU emulation, DOS and hardware abstraction support, and the support for interrupts. The bulk of the core system code for the DOS lies in supporting the features for every required interrupt function. Interrupts are specific routines which can accept parameters from the calling program and then return the results of the function in special variables. All of this happens by loading up certain register variables and invoking the interrupt CPU instruction. If you were to embed a small assembler routine into a C function, it may look like this:

void mouse_hide(void)

{

asm {

mov ax,02h;

int 33h;

}

}In this example, the value “02H” is being loaded into the AX register which represents the interrupt function to hide the mouse cursor, and the interrupt “33H” is an entry in the interrupt vector table to access available mouse functions (it acts like and index). DOSBox supports many interrupt functions, but their focus is around the ones necessary to run your favourite games. The important thing to remember about interrupts is that they do exactly that, they interrupt the CPU and force it to run the requested function.

Without going into too many technical details, interrupt authors generally follow two design rules: the functions must execute quickly and you shouldn’t call an interrupt from within another interrupt. The programmers working on DOSBox need to implement those interrupt functions in whatever way makes the most amount of sense on the running platform. So, if the game invokes an interrupt requesting a change of screen resolution and color mode, then the DOSBox emulator needs to adjust the resolution of the game window and invoke software support for VGA and EGA video modes, or a nice CGA video mode with a four-colour palette. Pretty.

The abstract front-end layer. This would be the charming side of DOSBox, if the project actually provided a graphical user interface out of the box. Instead, they have designed it one level deeper and abstracted the program’s front-end so that it could use different media and windowing libraries provided by the host’s operating system. By default, it uses the SDL library (SDL stands for Simple DirectMedia Layer) to handle the creation of the application container, window frame, sound, input functions and graphics modes. And lucky for them, SDL is available for Linux, Mac OS X, and Windows (and varied support for other platforms too), so there may never be a reason to move to a different library… until the SDL project is retired or if their senior programmer gets hit by a bus. If the project didn’t use a library like SDL, then the application would be tied to a specific set of operating system libraries, or it would need to provide an assortment of implementation modules for each OS target that followed a nice, clean little interface… like the ones provided by SDL.

I’ll pause while you give a big hug to the people working on that project. Don’t you feel better now? Wait, it wouldn’t be right to ignore DOSBox, since they are the stars of this little side show. Let’s spread the love around and try not to get too messy in the process.

The scaler layer. Strictly speaking, I wouldn’t really call this a layer, but the architecture does abstract it somewhat, and a lot of people like this feature so it’s worth discussing it a bit. When you decide to fire your favourite game up in DOSBox, you may notice the Window it creates is a little on the small side depending on your host’s current resolution. Wouldn’t it be nice if you could make the window bigger and still have it look good? That’s the job for the scaling routines. They take what would normally be a pixelated image (assuming you don’t like that sort of thing) and smooth out some of the rough spots. As with most scaling routines (other than piecewise-constant algorithms like nearest neighbour), there can be a bit of blurring but if the algorithms use a smaller set of surrounding pixels for their sample set, like an EPX or Scale2x routine then the result looks quite good and still maintains an acceptable level of sharpness and detail.

The shell layer. The shell provides a sub-set of the total native keyword commands available to DOS: DIR, CHDIR, ATTRIB, CALL, CD, CHOICE, CLS, COPY, DEL, DELETE, ERASE, ECHO, EXIT, GOTO, HELP, IF, LOADHIGH, LH, MKDIR, MD, PATH, PAUSE, RMDIR, RD, REM, RENAME, REN, SET, SHIFT, SUBST, TYPE, and VER. It also provides an execution environment for running batch files.

Hopefully, when you fire up your next DOSBox powered game (a number of product use this software, including game services like Steam), you’ll think of the long hours and tedious bits of programming that went into developing this stellar product, and maybe choose to send a bit more love their way this Christmas season.

Categories: DOS, Programming, Software

No Comments »

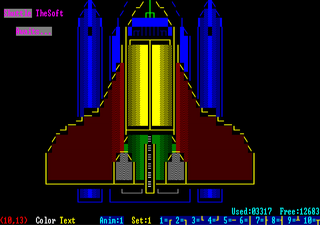

TheDRAW!

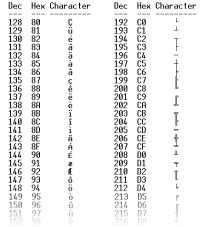

July 18, 2008There are two ways to render graphics in DOS. The first involves setting the desired graphics mode and then drawing pixels on the screen via the BIOS or writing directly to video memory (an interesting topic by itself); the second method involves staying in text mode and drawing pictures with the standard set of ASCII or IBM’s Extended ASCII characters (thanks to Telecom Corner for the chart).

While scripting batch files, you can also make use of ANSI escape sequences to control the cursor, display coloured or blinking text, etc. In case your interested, DOS requires an ANSI driver in order to translate these escape sequences. These escape sequences are non-intuitive and look something like this:

ESC[=5;7h

Ahh. Isn’t it cute? Many people have been discreetly killed for creating less offensive syntax than that. In addition to the regular set of ASCII characters which can be used in creative and artistic ways to produce a recognizable picture, using the set of extended ASCII characters provides an easy way to draw connected lines within the grid of displayable characters. When creating your picture keep in mind the screen size, since this is dependent on the video mode (some video modes can display more rows and columns).

TheDRAW was written by Ian E. Davis and was last released in October of 1993. It is a program which allows you to paint a picture or create an animation using these special characters and ANSI attributes. It’s not like painting individual pixels, you are limited by the set of characters available. However, this hasn’t stopped ASCII artists from creating fantastic content. The most stunning examples I found were on Bulletin Board Systems. They were often themed according to design of the on-line system. Using TheDRAW, these pictures could be exported as ANSI-compliant or ASCII text files, or as header files to be used in other programming languages. There are a number of small tools available for translating these files into other formats used by other languages.

TheDRAW was written by Ian E. Davis and was last released in October of 1993. It is a program which allows you to paint a picture or create an animation using these special characters and ANSI attributes. It’s not like painting individual pixels, you are limited by the set of characters available. However, this hasn’t stopped ASCII artists from creating fantastic content. The most stunning examples I found were on Bulletin Board Systems. They were often themed according to design of the on-line system. Using TheDRAW, these pictures could be exported as ANSI-compliant or ASCII text files, or as header files to be used in other programming languages. There are a number of small tools available for translating these files into other formats used by other languages.

TheDRAW package is bundled with a utility called TheGrab which can take an screen shot of a running program. It’s not your typical screen shot utility which takes snap shots of any graphics screen. This program works only for text mode screens and will output a file in ANSI, ASCII, COM, or TheDRAW format files. Be wary of using this memory resident utility under DOSBox 0.72 as it will crash and force you to end your session.

Last but not least, TheDRAW package is bundled with a utility for creating your own fonts to be used in TheDRAW paint program. This is without a doubt one of my favourite features and makes creating screens a breeze. There are many fonts available in the wide, wide world so have fun.

All of these features are thuroughly explained in the documentation and in-program help. Many of my programs took on a more professional and fun look because of this drawing tool; although I think I went overboard in some cases. I still find it the best tool available for doing this sort of work in DOS, although I’m sure many of you may prefer other software such as AcidDRAW.

Categories: DOS, Reflections, Software, Uncategorized

1 Comment »

PGP: Source Code and Internals

April 28, 2008 My copy finally arrived the other day and I am elated. Now, before you make another hasty buying decision based solely on my opinion, and on a single line of text, there are a couple of things worth mentioning about the book. First and foremost, the book does lack a few structural elements like plot and little things like paragraphs. Having read about the history of Philip Zimmerman and his toil with the U.S. government, I already knew this before I purchased the book.

My copy finally arrived the other day and I am elated. Now, before you make another hasty buying decision based solely on my opinion, and on a single line of text, there are a couple of things worth mentioning about the book. First and foremost, the book does lack a few structural elements like plot and little things like paragraphs. Having read about the history of Philip Zimmerman and his toil with the U.S. government, I already knew this before I purchased the book.

It does contain source code. Lots of code. The entire PGP program, in fact, including the project files. The book was sent to print because the digital privacy laws the government was attempting to enact at the time, did not cover the printed page. If all you want is the source code, you can simply find it on the Internet. Looking for a specific version, especially an older version, is more difficult and may be fraught with export restrictions.

The story behind the printing of the book is a fascinating history lesson and one we should all be concerned about, even if you live in another country, since we all know governments are not terribly adept at learning from their mistakes.

Categories: Books, Programming, Software

No Comments »

TheDraw for DOS

March 10, 2008There are two ways to display graphics in DOS. The first involves getting out of text mode and setting the desired graphics mode and then drawing pixels on the screen via the BIOS or writing directly to video memory; the second method involves drawing with the set of ASCII or extended ASCII characters. You can also make use of ANSI escape sequences to control the cursor, display coloured or blinking text, etc. DOS requires an ANSI driver in order to translate these escape sequences. These character codes are non-intuitive and look something like this: ESC[=5;7h. I swear my cat coughed up a fur ball which looked exactly like that the other day.

The set of extended ASCII characters provides an easy way to draw connected lines within the grid of displayable characters. This grid changes in size depending on the video mode set. With the default video mode in DOS, the dimensions of this grid is usually 80 characters wide by 25 characters high and covers the entire screen. Naturally, these characters can be connected to form anything from boxes to mazes. It’s how all those menus and dialogs are drawn when using a program like QBasic.

TheDRAW is a program which allows you to paint a picture using a variety of special characters. It’s not like painting individual pixels, you are limited by the set of characters available. However, this hasn’t stopped ASCII artists from creating fantastic content. The most stunning examples I found were on Bulletin Board Systems which used them to attract new and repeat visitors alike. The ANSI art found on those systems were often themed according to design of the on-line system or the whim of the system operator. Using TheDRAW, these pictures could be exported as ANSI-compliant or ASCII text files, or as header files to be used in other programming languages. There are a number of small tools available for translating these files into other formats used by other programming languages.

Many of my programs in QuickBasic took on a more professional and uniform look because of this spectacular drawing tool. I still find it the best tool available for doing this sort of work in DOS, although I’m sure many of you may prefer other programs.

When I began to write software using The C Programming Language, I used TheDRAW to create a title screen in a number of small programs (the concept of a splash screen hadn’t been introduced yet). Although most dialogs and windows I used were crafted in code using a custom built user interface library, the more complex dialogs were created using this application. To allow for input or interaction, the fields were marked by a special character, followed by a small series of alpha-numeric characters. These markers would be found by the interface library and the characters following the marker would correspond to a header file which linked the “names” to constants and then to user interface controls and data structures.

The output from TheDRAW was compressed and archived in a separate resource file to keep them from being easily pillaged by unworthy scavengers. I’m not an artist so it may have been overkill.

Categories: DOS, Software

1 Comment »

GW-BASIC

November 29, 2007 I first discovered this little gem while poking around on my Tandy 1000 RL computer back in 1991. Because I was familiar with various versions of BASIC already, I was able to fire it up and immediately begin writing fairly simple applications. However, there were differences to Atari’s version of BASIC, and no discernable way to figure that out without a book or some other documentation. I poked around on a few of my favourite Bulletin Board Systems (BBS) and found a small cache of programs written in GW-BASIC. I downloaded each of them, spending all of my download credits in the process. I poured over them line by line and found myself having more questions than answers.

I first discovered this little gem while poking around on my Tandy 1000 RL computer back in 1991. Because I was familiar with various versions of BASIC already, I was able to fire it up and immediately begin writing fairly simple applications. However, there were differences to Atari’s version of BASIC, and no discernable way to figure that out without a book or some other documentation. I poked around on a few of my favourite Bulletin Board Systems (BBS) and found a small cache of programs written in GW-BASIC. I downloaded each of them, spending all of my download credits in the process. I poured over them line by line and found myself having more questions than answers.

Many new programmers today expect there to be some sort of documentation available when they learn a new technology. It’s an expectation that has evolved over the years. Today, it would be practically unheard of if you couldn’t find some resource describing the software on the Internet, or some blurb in a book, or even bundled with the product. However, when I started looking for a book on GW-BASIC (version 3.23 to be exact), it was darn near impossible. The people I spoke with had no understanding of programming, let alone a specific language. The computer sections in the store were spartan compared to the sections you find in stores today. In fact, several years later, I still needed to special order the books I wanted through the book store; even today, I usually order my titles through Amazon since a store like Indigo usually doesn’t have them in stock.

I eventually wandered into a local computer store – I was attracted by a demo of Wing Commander II playing on one of their expensive new 486 machines, so I went in to ask them if they knew where I could get my hands on some material. I spoke with the owner and he seemed to recall seeing a book for BASIC in the back of the store. He left for a couple of minutes and returned with two books under his arm. One was for the exact version of GW-BASIC I was looking for and the other was a compatible book for MS-DOS. I was ecstatic and I nearly fainted when he gave them to me for free. I don’t remember what I said to the man that day, but I’m sure it wasn’t adequate.

I wrote so many programs in that environment. I think I still have a few of them today on floppy diskette. They were simple at first, like simple word games such as hangman. However, it wasn’t long before I discovered how to increase the resolution and colour depth and draw simple graphics. I had already written code for the Atari which made use of pixel plotting routines or simple geometric shapes. There were pre-canned routines the Atari provided for drawing shapes, along with a few parameters for style, colour, and shape. GW-BASIC was similar but provided several more commands and options. With a smaller font and a higher resolution, debugging within BASIC became more feasible. I still couldn’t scroll, but at least I could view a larger portion of the code on the screen.

Sound was also possible through the PLAY command, or if you were so inclined, through a custom routine written in assembly language which could be written to generate all sorts of sounds like noise or even speech. I had converted several songs using music sheets and converted them to their GW-BASIC equivalent. Not terribly exciting, but it did make for some creative demos which seemed to impress onlookers.

Some of the more interesting projects involved controlling external devices like printers. I must have programmed my software to use every conceivable option available through my Star NX 1000 II dot-matrix printer. I could instruct it to use standard type effects like bold and italics when printing text, but I could also program it to print graphical data like images and shapes. I even created printed graphics for the Mandel Brot set by rendering the fractal to my printer instead of the screen, one pixel at a time.

Perhaps the most interesting device was a robotic arm which was controlled through a series of commands dispatched through the parallel port. I didn’t have access to such a device at home but my school eventually purchased one, so one of the teachers decided to create a contest for students. Whoever could program the robotic arm first, would win a special prize. The task was to pick up a piece of chalk and draw a picture consisting of at least four shapes. It was a fun contest and I walked away with a pass which granted me as much lab time as I wanted.

Before I upgraded to a new machine and moved to a new version of MS-DOS, I was intimately familiar with every command in that book, even the more obscure commands like CHAIN and PCOPY. Although, the really obscure commands were still a mystery. I didn’t know you could actually write assembly language routines in GW-BASIC using DEF USR and USR commands until sometime later.

Categories: PC, Programming, Reflections, Software

No Comments »

Amiga OS

November 27, 2007 At around the same time I discovered DeskMate, the Amiga OS made its entry into the market as the aptly named operating system for the Amiga computer. Until recently, I had never owned an Amiga machine. I spent a fair amount of time with friends mucking around with various bits of software and hardware. One of my favourite combinations was the NewTek Video Toaster which was a powerful video editing software kit. You could do all sorts of video effects and overlays due to a technology called genlock. Genlock allowed you to synchronize different signals so that their sources become coincident, which made it possible for you to place blackout bar over your sister’s face just for kicks.It also sported several hardware and software features which I had never seen before. Real hardware multi-tasking was available on the Amiga 1000, but we mostly used the Amiga 500 which wasn’t as sophisticated in the same areas but provided terrific hardware for games. A friend of mine had a massive collection of software which were all archived in a custom-built diskette storage case made of wood and decaled with the Amiga logo. When we weren’t playing games and slogging through his massive collection of software, we were programming in Amiga BASIC or the occasional dip into the Lattice C compiler. I didn’t really understand the compiler since it was my first experience with a compiler, and it’s really difficult to learn a new programming technology when you have no means to experiment in your free time and no books available to read. At the time, I really wasn’t that interested anyway because I really connected with the structured BASIC available on the Amiga. It was a technology I could more readily understand because of my experience with Atari’s BASIC and Tandy’s GW-BASIC.

At around the same time I discovered DeskMate, the Amiga OS made its entry into the market as the aptly named operating system for the Amiga computer. Until recently, I had never owned an Amiga machine. I spent a fair amount of time with friends mucking around with various bits of software and hardware. One of my favourite combinations was the NewTek Video Toaster which was a powerful video editing software kit. You could do all sorts of video effects and overlays due to a technology called genlock. Genlock allowed you to synchronize different signals so that their sources become coincident, which made it possible for you to place blackout bar over your sister’s face just for kicks.It also sported several hardware and software features which I had never seen before. Real hardware multi-tasking was available on the Amiga 1000, but we mostly used the Amiga 500 which wasn’t as sophisticated in the same areas but provided terrific hardware for games. A friend of mine had a massive collection of software which were all archived in a custom-built diskette storage case made of wood and decaled with the Amiga logo. When we weren’t playing games and slogging through his massive collection of software, we were programming in Amiga BASIC or the occasional dip into the Lattice C compiler. I didn’t really understand the compiler since it was my first experience with a compiler, and it’s really difficult to learn a new programming technology when you have no means to experiment in your free time and no books available to read. At the time, I really wasn’t that interested anyway because I really connected with the structured BASIC available on the Amiga. It was a technology I could more readily understand because of my experience with Atari’s BASIC and Tandy’s GW-BASIC.

The Amiga OS made a strong impression on me as to what I was missing when I used a personal computer running DOS or Windows. There really wasn’t any comparison at the time. I remember feeling a bit underwhelmed whenever I switched my machine on after returning from a night of Amiga fun. However, after a few hours I was back into full swing. I’m not sure if I came to the realization that the machine I owned presented me with all sorts of different puzzles and tantalizing delights, but I do remember feeling like there was so much more to explore and learn. I believe that is what kept me from begging my folk’s for an Amiga every time I returned home. Although, I might have asked once or twice…

Categories: Amiga, Reflections, Software

No Comments »

Despite Tandy’s DeskMate application which tried to handle a number of disk and application related functions, MS-DOS (Microsoft Disk Operating System) was the actual operating system on which Deskmate needed to operate. I had experience with an earlier version of Microsoft’s DOS which I used at school; I believe the versions were 2.0 and 1.25. Unlike today where hard drives are common place and often taken for granted, the machines in the lab could only boot by way of 5 1/4″ floppy diskettes which contained the operating system. I believe the Tandy computer came with MS-DOS 3.42 and it was pre-installed on the hard drive. This was so much better than booting from a floppy, and I quickly assimilated as many commands as I could, since the school never gave me the time I wanted on the machine or the resources. Even with the aid of a text book, there were a few commands which took me a while to grasp, like COMMAND.COM or DEBUG.COM. My understanding of these wouldn’t completely solidify until I started programming in lower level languages like C and assembly language. I was also introduced to Microsoft’s shell scripts which they called Batch files. With the addition of extra software on the system, these scripts could actually become fairly sophisticated. Before I moved on to greener pastures and more flexible user interfaces, my system had sophisticated menus and launchers which helped my family out when they wanted to use the machine (it wasn’t often, but it did happen).

Despite Tandy’s DeskMate application which tried to handle a number of disk and application related functions, MS-DOS (Microsoft Disk Operating System) was the actual operating system on which Deskmate needed to operate. I had experience with an earlier version of Microsoft’s DOS which I used at school; I believe the versions were 2.0 and 1.25. Unlike today where hard drives are common place and often taken for granted, the machines in the lab could only boot by way of 5 1/4″ floppy diskettes which contained the operating system. I believe the Tandy computer came with MS-DOS 3.42 and it was pre-installed on the hard drive. This was so much better than booting from a floppy, and I quickly assimilated as many commands as I could, since the school never gave me the time I wanted on the machine or the resources. Even with the aid of a text book, there were a few commands which took me a while to grasp, like COMMAND.COM or DEBUG.COM. My understanding of these wouldn’t completely solidify until I started programming in lower level languages like C and assembly language. I was also introduced to Microsoft’s shell scripts which they called Batch files. With the addition of extra software on the system, these scripts could actually become fairly sophisticated. Before I moved on to greener pastures and more flexible user interfaces, my system had sophisticated menus and launchers which helped my family out when they wanted to use the machine (it wasn’t often, but it did happen).

Version 5 was a much more refined operating system and contained powerful commands and programs like QBasic. It also featured a memory manager which allowed software to access all of that wonderful new memory that you may have been fortunate enough to acquire. Although it was included in MS-DOS Version 4, I believe the DOSSHELL command’s popularity spiked in Version 5. This was a program which was intended as a graphical replacement to the dull COMMAND.COM shell. It introduced a feature called task swapping which allowed you to load more than one program but only run one of them at a time; unlike multitasking which appears to run more than one program at the same time. Also, DOSSHELL could not load more programs than your memory would allow since it did not have a paging mechanism. Naturally, all of these benefits conflicted with the new versions of Windows which did exactly the same thing only better, and was soon relegated to a supplemental disk in future releases before being dropped entirely.

Version 6 introduced the much feared DELTREE command. DELTREE was a command which could recursively remove directories and files; it was so feared because new or untrained users would presumably delete their entire operating system accidently. If this was indeed a problem, I think they should have modified the program to make it more difficult to accidently trash your operating system by adding extra prompts and warnings for example, since it is a very useful command in some circumstances. Despite its notoriety, it was a fairly simple command as far as software goes, and the void was soon filled by programmers who released new versions on the Internet which did exactly the same thing only better; I wrote a utility to replace it as well but mine displayed a user interface if you specified the right command option.

Categories: DOS, Reflections, Software

No Comments »